Motivation

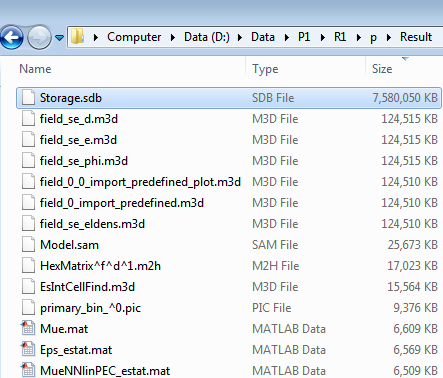

I am processing the results of a large simulation with CST Particle Studio. The simulation ran for weeks on a high-performance server and then got stuck for unknown reasons. Fortunately, I was able to copy the last state of the simulation from the server at the moment it got stuck. My model has a large number of objects and lumped elements, as the pictures show. Although each 1D diagram is not large at all (~20k data points per diagram), it took about ten minutes to open and view a single 1D result in CST, not to mention that I have thousands of diagrams to view and export.

Besides, viewing particle trajectories in a large TRK simulation is painful or impossible, especially when there are a large number of particles or many push steps. I wanted direct access to the raw data, so that I could decimate or filter it at the original source.

CST provides an official C API via CSTResultReader.DLL to load the 1D results externally:

C:\> dumpbin /EXPORTS CSTResultReader_AMD64.dll

ordinal hint RVA name

...

7 6 005F0080 CST_Get1DRealDataAbszissa

8 7 005F0110 CST_Get1DRealDataOrdinate

9 8 005EE220 CST_Get1DResultInfo

10 9 005EE190 CST_Get1DResultSize

11 A 005EFA20 CST_Get1D_2Comp_DataOrdinate

...

Internal structures of CST 1D results

After several minutes of exploration in the results folder of the CST project, the file Storage.sdb looked suspicious. A closer look shows that the file is a SQLite database:

$ sqlite3 Storage.sdb

sqlite> .tab

DataTables SigData2 SigData5

MetaData SigData3 SigHeader

SigData1 SigData4 SigMetaBlobData

SigHeader stores the name of the datasets:

sqlite> .sch SigHeader

CREATE TABLE SigHeader (sig_id INTEGER PRIMARY KEY,name TEXT,choice INTEGER,domtype INTEGER,domdim INTEGER,codomtype INTEGER,codomdim INTEGER,table_id INTEGER);

CREATE UNIQUE INDEX find_signal ON SigHeader ( name, choice );

sqlite> .h on

sqlite> select sig_id, name, table_id from SigHeader limit 10;

sig_id|name|table_id

9|signal_default_lf.sig|1

10|signal_default.sig|1

11|Alumina (96%) (lossy)_eps_re.sig|1

12|Alumina (96%) (lossy)_eps_im.sig|1

13|Alumina (96%) (lossy)_eps_tgd.sig|1

14|primary_interface_current_pic.sig|3

15|primary_interface_power_pic.sig|3

16|RefSpectrum_pic.sig|2

17|Wave-Particle_Power_Transfer.sig|4

18|usWall3[b]R_Top(pic).sig|1

Taking my problem as an example: I want to process all collision currents on the objects.

sqlite> select count() from sigheader where name like 'PICCollisionInfo_Current_%';

3179

Moreover, all the data I need are located in table 3:

sqlite> select distinct(table_id) from sigheader where name like '%Collision%';

3

Table 3 presumably corresponds to SigData3. Indeed, the intuitive guess was correct.

sqlite> select count() from sigdata3;

206321500

sqlite> select * from sigdata3 where sig_id in (select sig_id from sigheader where name like 'PICCollisionInfo%Current%Wall%' limit 1) limit 10 offset 5000;

sig_id|dom|codom

4690|34.120501743389|18.4575171960433

4690|34.1273258437377|18.5149683176805

4690|34.1341499440864|18.6246463171396

4690|34.1409740444351|18.4578551260741

4690|34.1477981447838|18.5667023734227

4690|34.1546222451324|18.6437357178942

4690|34.1614463454811|18.8157773486297

4690|34.1682704458298|18.7838105979049

4690|34.1750945461785|18.7335833887706

4690|34.1819186465272|18.6712175842331

The dom column is the time, and the codom column is the current I need. Additionally, there are some hints about the axes in the text file Model.res inside the CST result folder.
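The lookup described above (name in SigHeader, samples in a SigData table) can be sketched as a small helper. This is only a sketch: it assumes that a `table_id` of N always refers to a table called `SigDataN`, which matches what I observed for table 3 but is not documented anywhere. The function name `load_signal` is my own invention.

```python
import sqlite3

import numpy as np


def load_signal(conn, name):
    """Return (dom, codom) arrays for the first signal called `name`."""
    row = conn.execute(
        "SELECT sig_id, table_id FROM SigHeader WHERE name = ? LIMIT 1;",
        (name,),
    ).fetchone()
    if row is None:
        raise KeyError(name)
    sig_id, table_id = row
    # Assumption: table_id N refers to the table SigDataN. Table names cannot
    # be bound as SQL parameters, so the integer is formatted into the query.
    table = "SigData{:d}".format(int(table_id))
    rows = conn.execute(
        "SELECT dom, codom FROM {} WHERE sig_id = ? ORDER BY dom;".format(table),
        (sig_id,),
    ).fetchall()
    # reshape keeps the two-column layout even when the signal has no samples
    data = np.array(rows, dtype=float).reshape(-1, 2)
    return data[:, 0], data[:, 1]
```

With a connection opened via `sqlite3.connect("Storage.sdb")`, a call such as `load_signal(conn, "RefSpectrum_pic.sig")` would return the abscissa and ordinate of that curve as NumPy arrays.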

Example for loading and processing the CST results directly

Below is an example in which the net (impact + emission) current, summed over all objects, is calculated using a Python script.

#!/usr/bin/env python3
import sqlite3

import numpy as np


def query(c, pattern):
    """Sum the curves of all signals whose name matches the LIKE pattern."""
    ids = c.execute(
        "select sig_id from sigheader where name like ?;", [pattern]
    ).fetchall()
    r = None
    for i in ids:
        # i is a one-element row tuple (sig_id,), usable directly as parameters
        p = np.array(c.execute(
            "select dom, codom from sigdata3 where sig_id = ? order by dom;", i
        ).fetchall())
        if r is None:
            r = p  # the first curve provides the time axis
        else:
            r[:, 1] += p[:, 1]  # assumes identical time samples for all curves
    return r


if __name__ == "__main__":
    c = sqlite3.connect("Storage.sdb")
    collision = query(c, "PICCollisionInfo%Current%")
    emission = query(c, "PICEmissionInfo%Current%")
    np.savetxt("current_collision.txt", collision)
    np.savetxt("current_emission.txt", emission)
    # net current = collision + emission
    total = np.copy(collision)
    total[:, 1] += emission[:, 1]
    np.savetxt("current_total.txt", total)

$ time ./sum_current.py

real 2m37.889s

user 2m34.964s

sys 0m2.916s

$ wc -l *.txt

16310 current_collision.txt

16310 current_emission.txt

16310 current_total.txt

48930 total
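As an alternative to looping over the signals in Python, the summation can be pushed into SQLite with a single `GROUP BY` query. This is a different technique from the script above, not a drop-in replacement, and I have not benchmarked it against the real database. It relies on the same assumption as the element-wise addition: all matching signals must be sampled at exactly the same `dom` values. The function name `summed_current` and the default table name are my own choices.

```python
import sqlite3

import numpy as np


def summed_current(conn, pattern, table="SigData3"):
    """Sum codom over all signals whose name matches the LIKE pattern."""
    # `table` is formatted into the statement, so only pass trusted names.
    sql = (
        "SELECT dom, SUM(codom) FROM {0} "
        "WHERE sig_id IN (SELECT sig_id FROM SigHeader WHERE name LIKE ?) "
        "GROUP BY dom ORDER BY dom;"
    ).format(table)
    rows = conn.execute(sql, (pattern,)).fetchall()
    # Column 0 is the time axis, column 1 the summed current.
    return np.array(rows, dtype=float).reshape(-1, 2)
```

A call such as `summed_current(conn, "PICCollisionInfo%Current%")` would return a two-column array in the same layout that `np.savetxt` writes in the script above.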

Improve CST response time

The original file is 4.7 GB. Dumping a curve directly with sqlite3 takes 18 seconds, while showing the same diagram in CST takes ten minutes.

$ du -h Storage.sdb

4,7G Storage.sdb

$ time echo "select * from sigdata3 where sig_id in (select sig_id from sigheader where name like 'PICCollisionInfo%Current%Wall%' limit 1);" | sqlite3 Storage.sdb > /dev/null

real 0m18.150s

user 0m16.700s

sys 0m1.448s

The lookup can be accelerated by creating an index on the sig_id column of SigData3, which takes about 2 minutes and increases the size of the database to 7 GB.

$ time echo "create index sigdata3_sig_search on sigdata3(sig_id);" | sqlite3 Storage.sdb

real 1m44.019s

user 1m26.576s

sys 0m10.672s

$ du -h Storage.sdb

7,0G Storage.sdb

After indexing the 206321500 rows, dumping the same curve from the database is more than 300 times faster (0.055 s instead of 18.15 s).

$ time echo "select * from sigdata3 where sig_id in (select sig_id from sigheader where name like 'PICCollisionInfo%Current%Wall%' limit 1);" | sqlite3 Storage.sdb > /dev/null

real 0m0.055s

user 0m0.052s

sys 0m0.000s
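The same indexing step can be done from Python, which is convenient when several projects have to be treated. The following is a minimal sketch under the assumption that every SigData table has a `sig_id` column like SigData3. The helper names `ensure_sig_index` and `uses_index` are hypothetical. `CREATE INDEX IF NOT EXISTS` makes the call safe to repeat, and `EXPLAIN QUERY PLAN` gives a quick check that SQLite actually picks the index.

```python
import sqlite3


def ensure_sig_index(conn, table="SigData3"):
    """Create an index on sig_id for `table` unless it already exists."""
    index = "{}_sig_search".format(table.lower())
    # `table` is formatted into the statement, so only pass trusted names.
    conn.execute(
        "CREATE INDEX IF NOT EXISTS {} ON {}(sig_id);".format(index, table)
    )
    conn.commit()
    return index


def uses_index(conn, table, index):
    """Check via EXPLAIN QUERY PLAN whether a sig_id lookup uses `index`."""
    plan = conn.execute(
        "EXPLAIN QUERY PLAN "
        "SELECT dom, codom FROM {} WHERE sig_id = ?;".format(table),
        (0,),
    ).fetchall()
    # The last column of each plan row is the human-readable detail string.
    return any(index in str(row[-1]) for row in plan)
```

On a database as large as the one above, the first call takes as long as the command-line version, since the work is done by SQLite either way.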

Conclusion

Several versions ago, CST switched the storage of results to SQLite. This article explored the internal structure of that database and gave an example of processing the result data directly from the file on disk.

Furthermore, indexing the SQLite tables accelerates the response of the CST GUI from minutes to less than one second. This indexing trick might also work for the trajectory plot in the CST GUI, but I haven't tested it yet. I recommend that CST developers consider indexing these data by default.

The benchmarks were done with CST Particle Studio version 2016.

Update 2017-10-23

Currently I have to process 1.4 terabytes of PIC results with monitors, where the 1D results in Storage.sdb take up more than 300 gigabytes:

$ du -h Storage.sdb

347G Storage.sdb